注意:此图像分类课程已弃用,将于 2025 年 12 月 15 日删除。

Google uses AI technology to translate content into your preferred language. AI translations can contain errors.

Google uses AI technology to translate content into your preferred language. AI translations can contain errors.

机器学习实践:图像分类

使用集合让一切井井有条

根据您的偏好保存内容并对其进行分类。

防止过拟合

与所有机器学习模型一样,训练卷积神经网络时会遇到的一个关键问题是过拟合:模型紧密拟合训练数据的具体特征,以至于无法泛化到新样本。构建 CNN 时,您可以通过以下两种方法来防止出现过拟合:

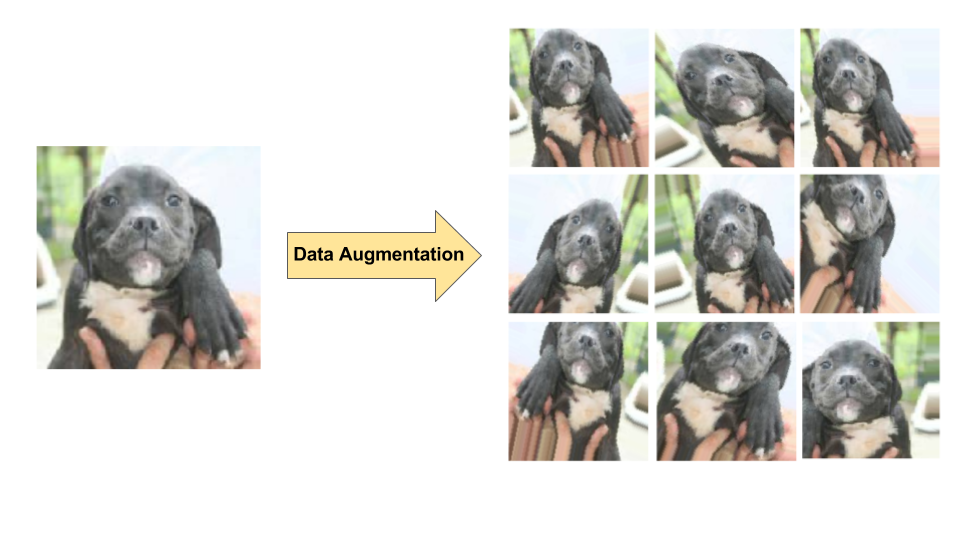

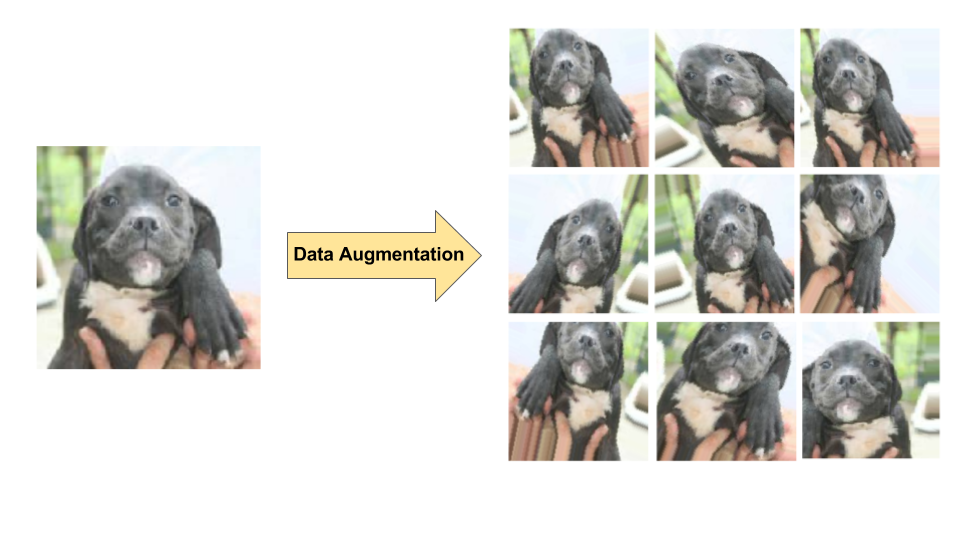

- 数据增强:通过随机转换现有图像生成一组新的图像,人为地增加训练样本的多样性和数量(参见图 7)。当原始训练数据集相对较小时,数据增强方法尤为有用。

- dropout 正规化:在一个训练梯度步长中随机地从神经网络中移除一些单元。

图 7. 单张狗狗图像的数据增强(摘自 Kaggle 上的“Dogs vs. Cats”数据集)。左图:训练集中的原始狗狗图像。右图:对原始图像进行随机转换后生成的九张新图。

图 7. 单张狗狗图像的数据增强(摘自 Kaggle 上的“Dogs vs. Cats”数据集)。左图:训练集中的原始狗狗图像。右图:对原始图像进行随机转换后生成的九张新图。

如未另行说明,那么本页面中的内容已根据知识共享署名 4.0 许可获得了许可,并且代码示例已根据 Apache 2.0 许可获得了许可。有关详情,请参阅 Google 开发者网站政策。Java 是 Oracle 和/或其关联公司的注册商标。

最后更新时间 (UTC):2025-01-18。

[null,null,["最后更新时间 (UTC):2025-01-18。"],[],[]]

图 7. 单张狗狗图像的数据增强(摘自 Kaggle 上的“Dogs vs. Cats”数据集)。左图:训练集中的原始狗狗图像。右图:对原始图像进行随机转换后生成的九张新图。

图 7. 单张狗狗图像的数据增强(摘自 Kaggle 上的“Dogs vs. Cats”数据集)。左图:训练集中的原始狗狗图像。右图:对原始图像进行随机转换后生成的九张新图。