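Multimodal prompting is a type of prompting for large language models (LLMs) that combines more than one input format. A format here means an input type such as text or images. Multimodal prompting with LLMs enables a range of interesting use cases, including image classification, handwriting recognition, translation, and other creative scenarios.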

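In this document, we walk through 8 examples to highlight the kinds of prompts you can build when you give Gemini models text and image input and receive text-only responses.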

What is multimodal prompting?

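Gemini can currently be prompted with a mix of text and/or image input and returns a text-only response. The text can provide context for the image, or it can ask the model to act on the image or generate a response about it.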

For example, the following is a multimodal prompt:

“What color is the cat?”

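The text in this prompt provides context for the image: it makes clear that the image contains a cat and that we are mainly interested in the cat and its color.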
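To make the idea concrete, a multimodal prompt can be thought of as an ordered list of parts, some of them text and some of them images. The sketch below is only an illustration of that structure: the `TextPart`, `ImagePart`, and `build_prompt` names are hypothetical and are not part of any Gemini SDK, and the image bytes are a placeholder.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class TextPart:
    """A piece of text in the prompt (hypothetical type, for illustration)."""
    text: str


@dataclass(frozen=True)
class ImagePart:
    """Raw image bytes plus a MIME type (hypothetical type, for illustration)."""
    data: bytes
    mime_type: str = "image/png"


Part = Union[TextPart, ImagePart]


def build_prompt(image: bytes, question: str) -> List[Part]:
    """Return the image followed by the text that gives it context."""
    if not question.strip():
        raise ValueError("the text part of the prompt must not be empty")
    return [ImagePart(image), TextPart(question.strip())]


# Placeholder bytes stand in for a real photo of a cat.
prompt = build_prompt(b"<image bytes>", "What color is the cat?")
```

Keeping the parts in an ordered list mirrors the idea that the text and the image are read together as one prompt.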

3 simple multimodal prompts

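The following three very simple examples show how to give an LLM an image plus text and receive a text-only response. We look at recognizing whether a certain kind of entity appears in an image, identifying the entities in an image without specifying in advance what to look for, and finally an example that combines recognition with counting.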

Classification

“Does this image contain a cat? Respond with either true or false.”

Gemini's response:

True

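This very simple example highlights the LLM's ability to recognize whether something is present in an image and to answer the developer in a boolean fashion. This approach can be useful for detecting specific content, for filtering or other purposes.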
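Because the reply arrives as text, a developer would typically convert it into a real boolean before using it in a filter. The following is a minimal sketch of that step; `parse_bool_reply` is a hypothetical helper, and it assumes the model answers with just the word true or false, possibly with different casing or a trailing period.

```python
def parse_bool_reply(reply: str) -> bool:
    """Convert a 'true' / 'false' text reply into a Python bool."""
    # Ignore surrounding whitespace, letter case, and trailing punctuation.
    normalized = reply.strip().rstrip(".!").lower()
    if normalized == "true":
        return True
    if normalized == "false":
        return False
    # Anything else is treated as an error rather than guessed at.
    raise ValueError(f"expected 'true' or 'false', got {reply!r}")


contains_cat = parse_bool_reply("True")
```

Raising on unexpected text, instead of defaulting to `False`, keeps an off-format reply from being silently treated as "no cat".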

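It is worth noting that a dedicated image recognition model may be more effective at very specific tasks. However, creating a custom ML model takes time and expertise, whereas a solution built on Gemini can be put together more quickly. It is therefore important to compare the quality of the responses available from the different models and to decide based on these tradeoffs.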

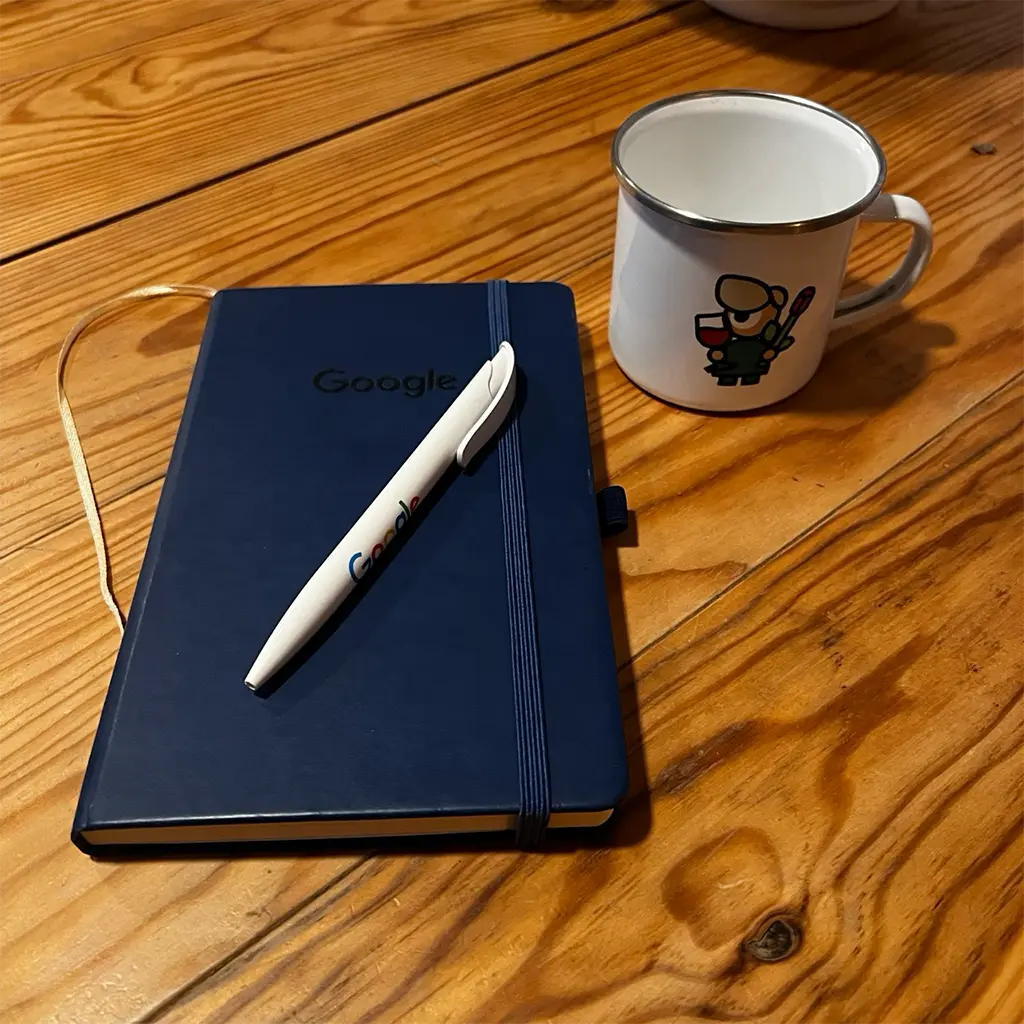

Recognition

“Give me a list of all the important things in this picture.”

Gemini's response:

- A Google notebook

- A Google pen

- A mug

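The example above highlights that we can ask the LLM an open-ended question about what appears in an image. With Gemini, the image recognition process does not need a different model for each type of object (for example, books or mugs). Instead, a single model identified all of the important elements in a single pass.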
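A list-style reply like the one above is still plain text, so code that consumes it needs to split it into items. This is a minimal sketch under the assumption that each item is on its own line and starts with a bullet marker; `parse_bullet_list` is a hypothetical helper, and the sample reply is written out in English for illustration.

```python
from typing import List


def parse_bullet_list(reply: str) -> List[str]:
    """Split a bulleted text reply into a list of item strings."""
    items = []
    for line in reply.splitlines():
        # Drop a leading bullet marker such as '-', '*', or '•'.
        item = line.strip().lstrip("-*•").strip()
        if item:  # skip blank lines and bare bullet markers
            items.append(item)
    return items


reply = "- A Google notebook\n\n- A Google pen\n\n- A mug"
items = parse_bullet_list(reply)
```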

Counting

“Count the number of cats in this picture. Give me only the final number in the response.

Only give me back a number.”

Gemini's response:

3

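In this example, we make a specific request for one type of object and specify that we want it counted in the image. In addition, the prompt asks for the response to be purely numeric. However, the quality of the count can vary depending on how many objects need to be counted in the image. Because Gemini is a general-purpose multimodal model, its accuracy beyond small numbers may be limited.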
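Even when the prompt asks for a bare number, it can be safer to parse the reply defensively. The sketch below assumes the count is the last integer in the reply, which matches the "final number" wording of the prompt; `parse_count` is a hypothetical helper.

```python
import re


def parse_count(reply: str) -> int:
    """Return the last non-negative integer that appears in the reply."""
    numbers = re.findall(r"\d+", reply)
    if not numbers:
        raise ValueError(f"no number found in reply: {reply!r}")
    return int(numbers[-1])


cat_count = parse_count("3")
```

Given the accuracy caveat above, an application might also spot-check larger counts by hand rather than trusting them outright.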

5 advanced multimodal prompts

Next, we look at some interesting examples of more advanced ways to use multimodal prompting with text and image input.

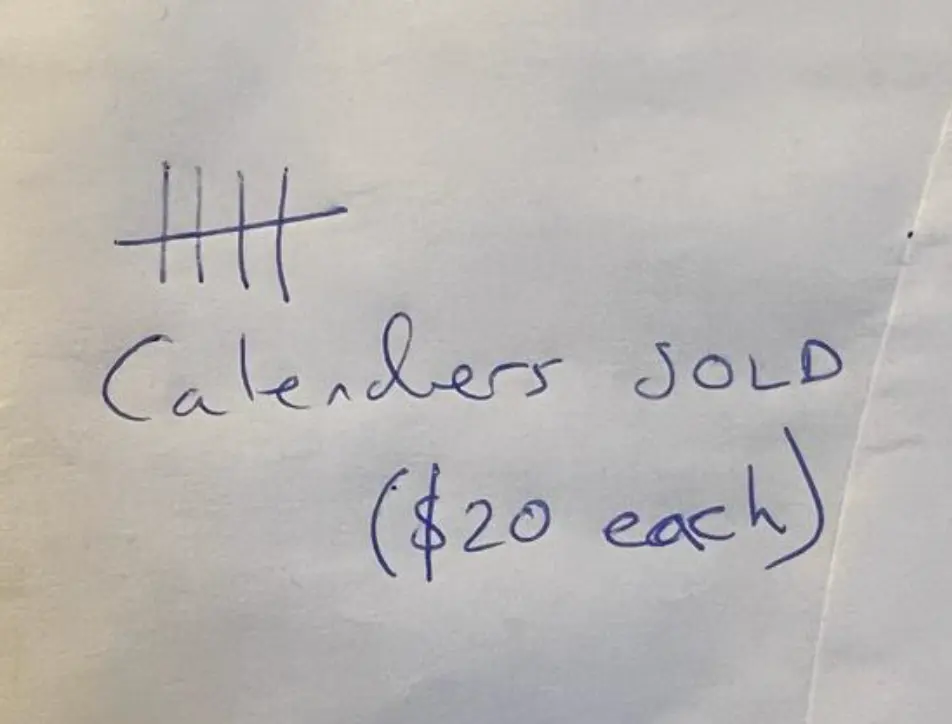

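Text recognition, reasoning, and calculation

“How much money did we make today in total? Explain your reasoning.”

Gemini's response:

The total amount of money made today is $100.

This is because the note says that 5 calendars were sold at $20 each. 5 x $20 = $100.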

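This more advanced prompt and image demonstrate several of Gemini's capabilities at once. The first is handwriting recognition: the AI recognized that the text, handwritten in English, refers to the number of calendars sold and the price of each. In addition, prompted by the notion of a tally, the AI recognized the five-point tally notation (that is, 4 vertical lines and 1 horizontal strike-through line). Finally, because the prompt steers the AI through the calculation steps, we get a walkthrough of how it calculated the revenue.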
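Since the model explains its arithmetic, the result is easy to check independently. The following sketch recomputes the total from the two values stated in the explanation (5 calendars at $20 each) and compares it with the amount in the reply. `extract_dollar_amount` is a hypothetical helper, and it assumes an English reply that writes whole-dollar amounts as `$<digits>`.

```python
import re


def extract_dollar_amount(reply: str) -> int:
    """Return the first whole-dollar amount written as '$<digits>' in the reply."""
    match = re.search(r"\$(\d+)", reply)
    if match is None:
        raise ValueError(f"no dollar amount found in reply: {reply!r}")
    return int(match.group(1))


# Values taken from the example: one tally group of 5 calendars at $20 each.
calendars_sold = 5
price_per_calendar = 20
expected_total = calendars_sold * price_per_calendar

reply = "The total amount of money made today is $100."
reported_total = extract_dollar_amount(reply)
totals_match = reported_total == expected_total
```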

World context and reasoning

“What time of day is it in this picture, in 24-hour time?”

Gemini's response:

19:30

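This example highlights the model's ability to recognize the lighting of a scene and to infer approximate time information from the image, within a certain time range.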
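A reply such as the one above can be validated as a 24-hour time before it is used. This is a minimal sketch using the standard library; `parse_24h_time` is a hypothetical helper, and it assumes the reply contains only the time in `HH:MM` form.

```python
from datetime import datetime, time


def parse_24h_time(reply: str) -> time:
    """Parse an 'HH:MM' reply; raises ValueError if it is not a valid 24-hour time."""
    return datetime.strptime(reply.strip(), "%H:%M").time()


photo_time = parse_24h_time("19:30")
```

Because the model is inferring the time from lighting, the parsed value is best treated as an estimate rather than an exact timestamp.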

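Interpretation and creativity

“Write a haiku about this photo.”

Gemini's response:

A bench by the lake,

A view of the mountains beyond,

A moment of peace.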

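Here, we see Gemini's strength at storytelling and at more creative uses of generative AI. By providing an image as the starting point, we can be surprised by where the AI takes the storyline, or by which features of the image it decides to weave into the story. Text-only prompts for story generation are often limited to our own initial idea, but a real photo can serve as a powerful starting point for the AI's thought process.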

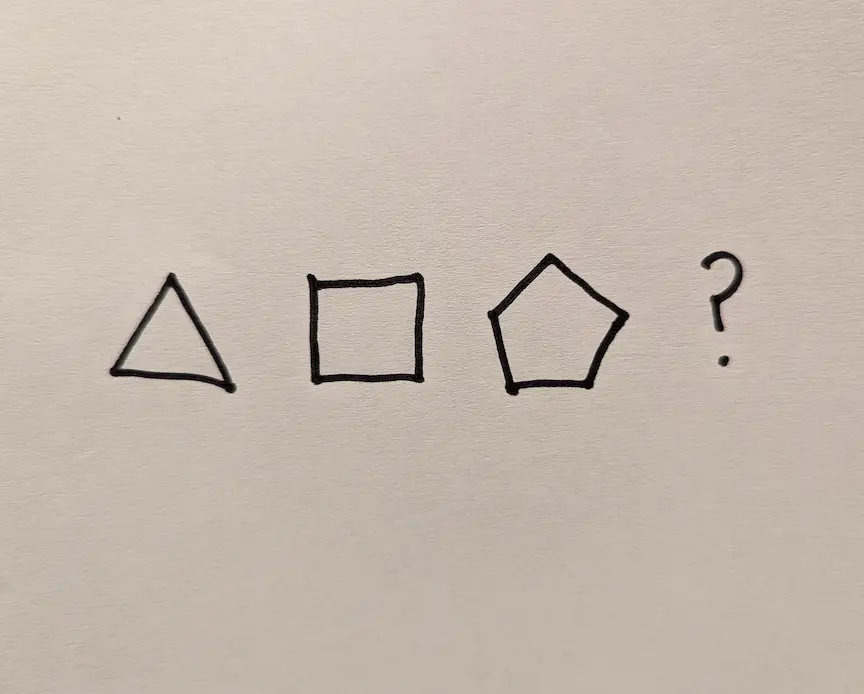

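Logical progression

“What comes next? Explain your reasoning.”

Gemini's response:

The triangle has 3 sides, the square has 4 sides, and the pentagon has 5 sides. The number of sides increases by 1 for each shape. Therefore, the next shape should have 6 sides, which is a hexagon.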

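This example again combines several of Gemini's skills. Not only does it recognize the shapes, it also understands that, although they are drawn somewhat crudely, they are distinct mathematical shapes with specific properties (for example, 3 sides, 4 sides, 5 sides).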

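On top of this, the presence of the question mark does not confuse Gemini in its interpretation of the logical progression of the geometric shapes. Instead, Gemini “sees” a mathematical progression from 3 to 4 to 5, concludes that the last shape must have 6 sides, and aptly proposes a hexagon.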
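The progression described in the reply is a simple arithmetic sequence. The sketch below spells out that reasoning in code so the answer can be checked by hand; it illustrates the logic only and says nothing about how the model works internally. `next_side_count` and the `POLYGON_NAMES` table are hypothetical names introduced for this example.

```python
from typing import Sequence

# Names for a few polygons, keyed by number of sides.
POLYGON_NAMES = {
    3: "triangle",
    4: "square",
    5: "pentagon",
    6: "hexagon",
    7: "heptagon",
    8: "octagon",
}


def next_side_count(side_counts: Sequence[int]) -> int:
    """Extend a sequence that has a constant step and return the next term."""
    if len(side_counts) < 2:
        raise ValueError("need at least two terms to infer a step")
    # Collect the differences between neighbouring terms.
    steps = {b - a for a, b in zip(side_counts, side_counts[1:])}
    if len(steps) != 1:
        raise ValueError("the sequence does not have a constant step")
    return side_counts[-1] + steps.pop()


next_sides = next_side_count([3, 4, 5])
next_shape = POLYGON_NAMES[next_sides]
```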

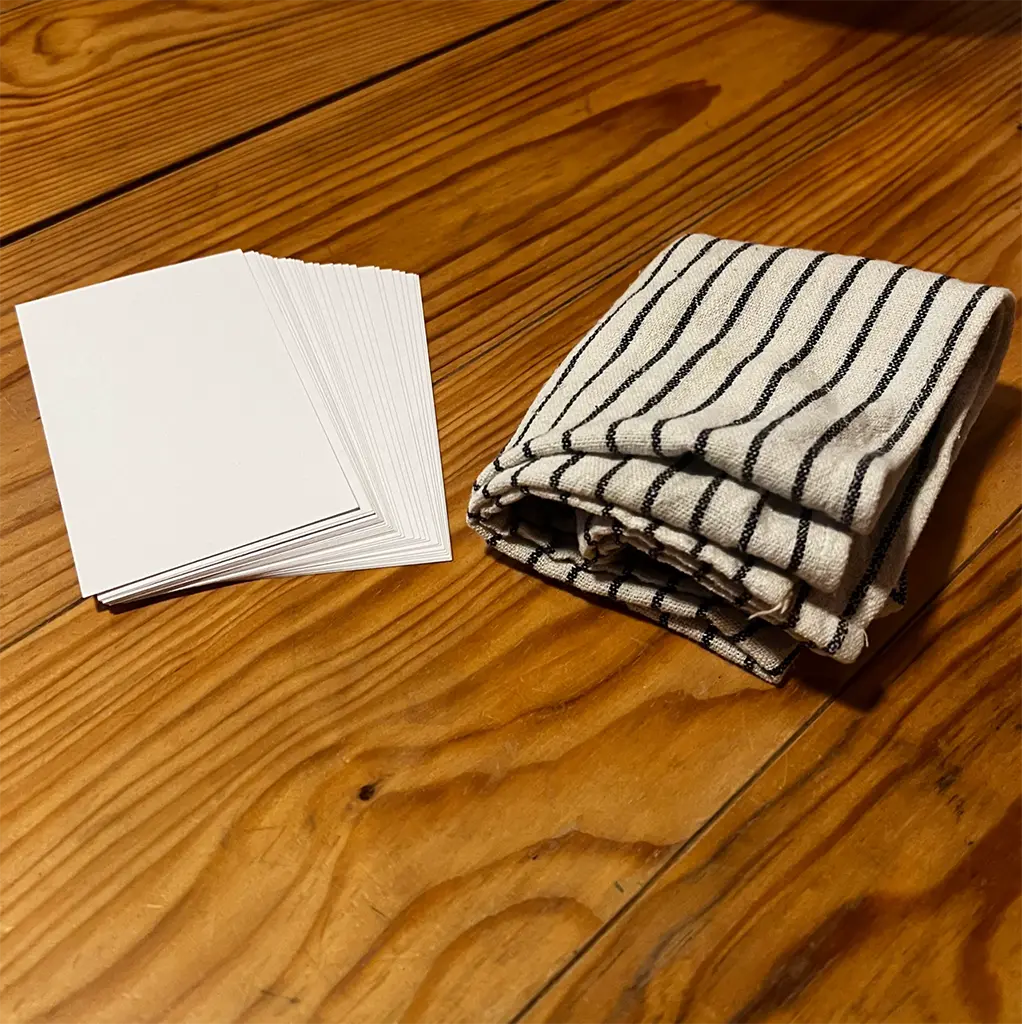

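World interpretation and understanding

“Which of these would be better for writing on? Which would be better for making a scarf?”

Gemini's response:

Paper is better for writing on because it has a smooth surface and ink does not bleed through it. Fabric is better for making a scarf because it is soft and can keep you warm.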

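Here we are using Gemini in a very creative way. Not only does the AI understand the image, it also infers properties and real-world physical characteristics from what it sees. The reasoning rests on an intuitive understanding of the objects' materials and of how they interact with the purposes outlined in the prompt.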

Conclusion

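These are just a few examples of the ways in which multimodal prompting can be used. With LLMs, you can use multimodal prompting not only to understand text and images, but also to form new ideas or draw insight from them. We encourage you to experiment with different types of multimodal prompts and see what you can create.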