Developer solutions and architecture

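Androidify: Build a modern, AI-powered Android app

Turn yourself into an Android bot with a selfie and AI in this modern Android app. It combines Jetpack Compose for a polished UI, Gemini and Firebase for AI-driven features, and CameraX for a smooth camera experience, and it is designed to adapt to a wide range of devices.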

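Build grounded AI agents with Firebase Data Connect and a SQL database

Explore a full-stack architecture that pairs a Next.js frontend with a SQL database and a Firebase Data Connect backend, and that uses Genkit agents, vector search, and retrieval-augmented generation (RAG) to produce intelligent, data-driven responses.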
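As a rough illustration of the retrieval step behind RAG, the sketch below ranks a few rows by cosine similarity to a query and places the best matches in a prompt. It is a minimal sketch under stated assumptions, not the Genkit or Firebase Data Connect API: `embed` is a toy bag-of-words stand-in for a real embedding model, and `ROWS`, `retrieve`, and `build_prompt` are hypothetical names introduced here for illustration.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, rows, k=2):
    """Return the k rows most similar to the query (the vector search step)."""
    q = embed(query)
    ranked = sorted(rows, key=lambda r: cosine(q, embed(r)), reverse=True)
    return ranked[:k]


def build_prompt(query, rows, k=2):
    """Ground the prompt in retrieved rows (the augmentation step)."""
    context = "\n".join(f"- {r}" for r in retrieve(query, rows, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical rows, standing in for records stored in the SQL database.
ROWS = [
    "espresso machine ships in two days",
    "returns are accepted within thirty days",
    "gift cards never expire",
]
```

In a real deployment, the database would store precomputed embeddings and perform the similarity search itself; the prompt returned by `build_prompt` would then be sent to the model.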

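Living Canvas: A web-based generative AI puzzle game

Build a dynamic web experience that uses Gemini, Imagen, and Veo to respond to users' drawings in real time. Explore an architecture that integrates a Gemini, Functions, and Firestore backend with Angular and PhaserJS on Firebase Hosting.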

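AI Barista: An end-to-end architecture for agentic apps

Build agentic experiences with Firebase and Google Cloud. Explore Genkit-powered agents that respond to multimodal user input, use tool calling to orchestrate complex tasks, and include human-in-the-loop flows.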
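Tool calling with a human-in-the-loop check can be sketched roughly as follows. This is an illustrative sketch only, not the Genkit API: the tool names, the `needs_approval` flag, and the hard-coded `PLAN` (standing in for tool calls that a model would emit) are all assumptions made for this example.

```python
def check_menu(item):
    """Hypothetical read-only tool: look up a price."""
    menu = {"latte": 4.5, "espresso": 3.0}
    return {"item": item, "price": menu.get(item)}


def place_order(item):
    """Hypothetical tool with a side effect: place an order."""
    return {"status": "ordered", "item": item}


# Tool registry; side-effecting tools are marked as needing human approval.
TOOLS = {
    "check_menu": {"fn": check_menu, "needs_approval": False},
    "place_order": {"fn": place_order, "needs_approval": True},
}


def run_plan(plan, approve):
    """Execute tool calls in order, pausing for approval where required.

    `approve` is a callback that receives the pending call and returns
    True or False; in an app it would prompt the user.
    """
    results = []
    for call in plan:
        tool = TOOLS[call["tool"]]
        if tool["needs_approval"] and not approve(call):
            results.append({"tool": call["tool"], "status": "rejected"})
            continue
        output = tool["fn"](**call["args"])
        results.append({"tool": call["tool"], "status": "ok", "output": output})
    return results


# Stand-in for the sequence of tool calls a model might request.
PLAN = [
    {"tool": "check_menu", "args": {"item": "latte"}},
    {"tool": "place_order", "args": {"item": "latte"}},
]
```

For example, `run_plan(PLAN, lambda call: False)` runs the menu lookup but records the order as rejected, because only the side-effecting tool is gated on approval.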

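Compass: An agent-powered travel planning app built with generative AI

Explore an architecture based on Genkit and Flutter for building multi-platform apps that seamlessly integrate AI input with retrieval-augmented generation (RAG).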

Build an AI-powered meal preparation app for Android

Learn how to use Gemini in Android Studio, Firebase, and Google technologies to build an engaging Android app.

Create a multiplayer crossword game with the Gemini API, Flutter, and Firebase

Learn how Google engineering teams used Gemini, Flutter, and Firebase to create a multiplayer crossword game.

Get started with the Gemini API and web apps

Learn how to use the Gemini API and the Google Gen AI SDK to prototype generative AI features for web apps.

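Use Gemini and Gemma generative AI in game development

Learn how to use Gemini and Gemma models to apply generative AI at different stages of game development, from pre-production to in-game solutions.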

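Image understanding, multimodal prompting, and accessibility with the Gemini Pro Vision model

Learn how to use the multimodal capabilities of Gemini models to analyze HTML documents and image files in a Node.js script, so that you can add accessibility descriptions to web pages.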

Serverless ecommerce web app built with Python, Cloud Run, Cloud SQL, and Firebase

Learn how to build a modern serverless ecommerce web app with a Django backend on Cloud Run, Cloud SQL for data storage, and Firebase.

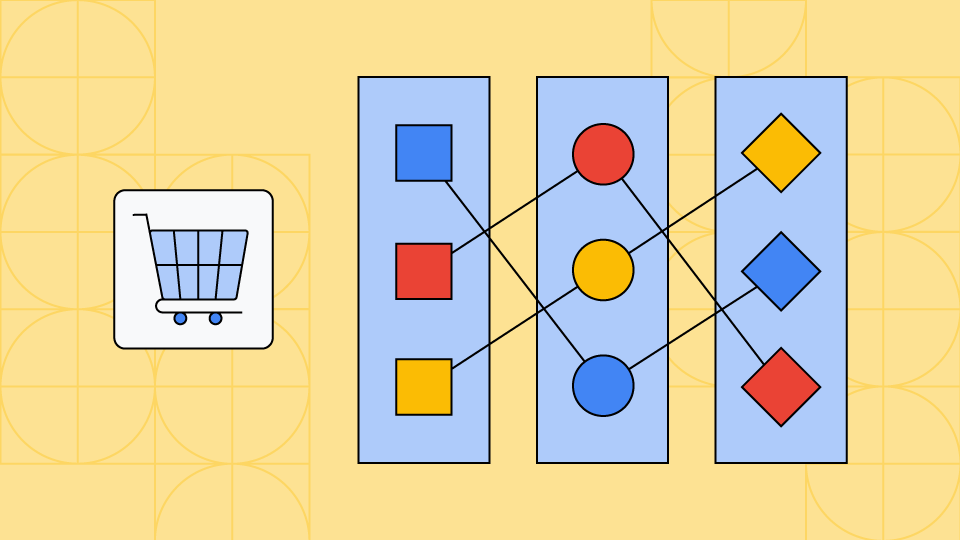

Microservices-based ecommerce web app on Kubernetes

Learn how to build a distributed, scalable ecommerce web app with microservices on Kubernetes.

Modern three-tier web app with Cloud Run

Learn how to build a multi-tier web app with a Golang backend running on Cloud Run and a Cloud SQL database.