Conclusion

Fairness is not a one-time goal to be achieved; it's an ongoing effort. Here's more on Jigsaw's continuing work to mitigate bias in its Perspective API models.

Learn more about ML Fairness

Continue your ML Fairness education with these resources:

MLCC Fairness Self-Study

This one-hour self-study course introduces the fundamental concepts of ML Fairness, including common sources of bias, how to identify bias in data, and how to evaluate model predictions with fairness in mind.

ML Glossary

The ML Glossary contains over 30 ML Fairness entries, which provide beginner-friendly definitions as well as examples of common biases, key fairness evaluation metrics, and more.

Incorporate Fairness into your ML workflows

Use the following tools to help identify and remediate bias in ML models:

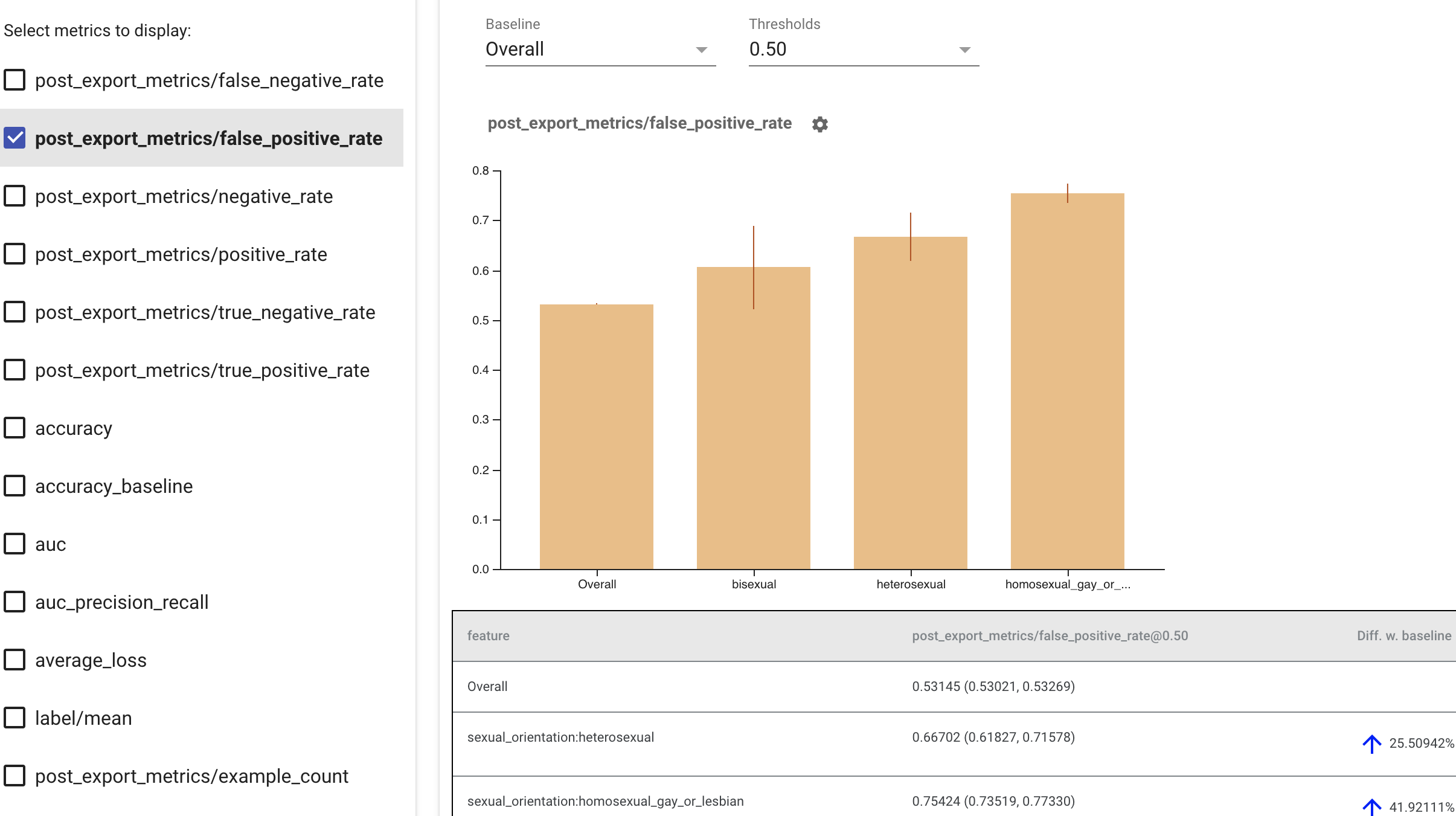

Fairness Indicators

Fairness Indicators is a visualization tool powered by TensorFlow Model Analysis (TFMA) that evaluates model performance across subgroups and then graphs results for a variety of popular metrics, including false positive rate, false negative rate, precision, and recall.
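To make the idea of per-subgroup evaluation concrete, here is a minimal sketch in plain Python. It does not use the Fairness Indicators or TFMA APIs; it only illustrates the kind of sliced metrics such a tool reports. The function name `subgroup_metrics`, the group names, and the toy data are all made up for illustration.

```python
from collections import defaultdict


def _ratio(numerator, denominator):
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def subgroup_metrics(labels, predictions, groups):
    """Compute binary-classification metrics separately for each subgroup.

    labels and predictions are sequences of 0/1 values; groups holds the
    subgroup identifier for each example. All names here are illustrative.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for y, p, g in zip(labels, predictions, groups):
        if y == 1 and p == 1:
            key = "tp"
        elif y == 0 and p == 1:
            key = "fp"
        elif y == 0 and p == 0:
            key = "tn"
        else:  # y == 1 and p == 0
            key = "fn"
        counts[g][key] += 1

    results = {}
    for g, c in counts.items():
        results[g] = {
            "false_positive_rate": _ratio(c["fp"], c["fp"] + c["tn"]),
            "false_negative_rate": _ratio(c["fn"], c["fn"] + c["tp"]),
            "precision": _ratio(c["tp"], c["tp"] + c["fp"]),
            "recall": _ratio(c["tp"], c["tp"] + c["fn"]),
        }
    return results


# Toy data (invented): four examples each for two hypothetical subgroups.
labels = [1, 0, 1, 0, 1, 0, 0, 1]
predictions = [1, 0, 0, 0, 1, 1, 1, 1]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

metrics = subgroup_metrics(labels, predictions, groups)
for group, values in sorted(metrics.items()):
    print(group, values)
```

In this toy data, every negative example in subgroup "b" is predicted positive, while none in subgroup "a" is. A gap like that in false positive rate between subgroups is the sort of disparity a sliced evaluation is meant to surface.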

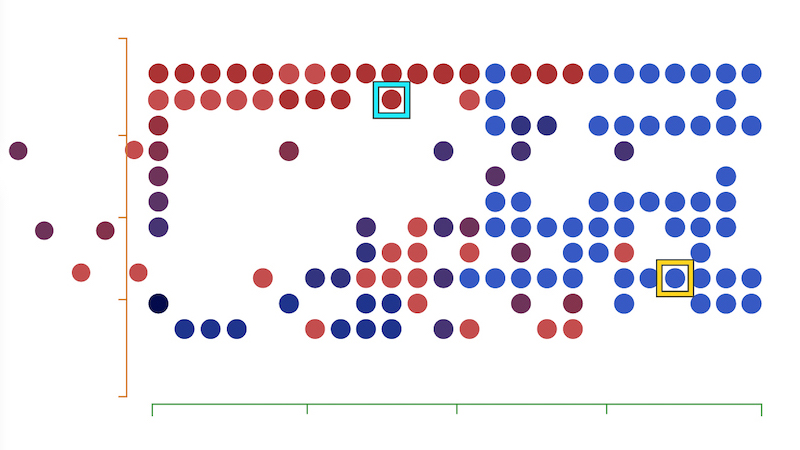

What-If Tool

The What-If Tool is an interactive visual interface designed to help you probe your models more closely. Investigate model performance for a range of features in your dataset using different optimization strategies, and explore the impact of manipulating individual datapoint values.
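The idea of manipulating an individual datapoint can be sketched in a few lines of Python. This is not the What-If Tool API; it is a hand-rolled illustration of the underlying question ("how does the score change if I edit one feature?"). The scoring function `toy_score`, its weights, and the feature names are hypothetical stand-ins for a real trained model.

```python
def toy_score(example):
    """Hypothetical stand-in for a trained model; returns a score in [0, 1]."""
    score = 0.2 + 0.5 * example["contains_insult"] + 0.2 * example["mentions_identity"]
    return min(1.0, score)


def counterfactual_delta(score_fn, example, feature, new_value):
    """Return the change in score when one feature of one example is edited.

    The original example is left unmodified; the edit is applied to a copy.
    """
    edited = dict(example)
    edited[feature] = new_value
    return score_fn(edited) - score_fn(example)


# Invented datapoint: no insult, but an identity term is present.
example = {"contains_insult": 0, "mentions_identity": 1}

delta = counterfactual_delta(toy_score, example, "mentions_identity", 0)
print(f"Score change after removing the identity term: {delta:+.2f}")
```

A nonzero change for an edit that should be irrelevant to the prediction is a signal worth investigating further with sliced metrics on real evaluation data.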