PageSpeed Insights (PSI) reports on the user experience of a page on both mobile and desktop devices, and provides suggestions on how that page may be improved.

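PSI provides both lab data and field data about a page. Lab data is useful for debugging because it is collected in a controlled environment. However, it may not capture real-world performance bottlenecks. Field data is useful for capturing the true, real-world user experience, but it covers a more limited set of metrics. See How To Think About Speed Tools for more information on the two types of data.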

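Real-user experience data

Real-user experience data in PSI is powered by the Chrome User Experience Report (CrUX) dataset. PSI reports real users' First Contentful Paint (FCP), Interaction to Next Paint (INP), Largest Contentful Paint (LCP), and Cumulative Layout Shift (CLS) experiences over the previous 28-day collection period. PSI also reports experiences for the experimental metric Time to First Byte (TTFB).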

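To show user experience data for a given page, there must be enough data for the page to be included in the CrUX dataset. A page might not have enough data if it was published recently or has too few samples from real users. When this happens, PSI falls back to origin-level granularity, which covers all user experiences on all pages of the website. Sometimes the origin may also have insufficient data, in which case PSI cannot show any real-user experience data.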
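
The fallback described above can be sketched in a few lines. This is only an illustration of the decision order, not PSI's implementation: the function name `select_field_data`, the `FieldData` alias, and the convention that `None` means "not enough data in CrUX" are all assumptions made for the example.

```python
from typing import Dict, Optional, Tuple

# Hypothetical shape: metric name -> 75th percentile value.
FieldData = Dict[str, float]


def select_field_data(
    page: Optional[FieldData],
    origin: Optional[FieldData],
) -> Tuple[str, Optional[FieldData]]:
    """Pick which field data to show, preferring page-level data.

    None stands for "insufficient data" (an assumption of this sketch).
    """
    if page is not None:
        return ("page", page)
    if origin is not None:
        # Page-level data is missing, so fall back to the whole origin.
        return ("origin", origin)
    # Neither level has enough samples: nothing can be shown.
    return ("none", None)
```

Calling `select_field_data(None, {"LCP": 2300.0})` would select the origin-level data, mirroring the case of a recently published page on an established site.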

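Assessing quality of experiences

PSI classifies the quality of user experiences into three buckets: Good, Needs Improvement, or Poor. PSI sets the following thresholds in alignment with the Web Vitals initiative: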

| | Good | Needs Improvement | Poor |
|---|---|---|---|
| FCP | [0, 1800 ms] | (1800 ms, 3000 ms] | over 3000 ms |
| LCP | [0, 2500 ms] | (2500 ms, 4000 ms] | over 4000 ms |
| CLS | [0, 0.1] | (0.1, 0.25] | over 0.25 |
| INP | [0, 200 ms] | (200 ms, 500 ms] | over 500 ms |
| TTFB (experimental) | [0, 800 ms] | (800 ms, 1800 ms] | over 1800 ms |
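
As a minimal sketch, the thresholds in the table can be expressed as a lookup plus two comparisons. The names `THRESHOLDS` and `classify_metric` are hypothetical and chosen for this example; the numeric bounds are taken from the table, with both upper bounds treated as inclusive to match the interval notation.

```python
from typing import Dict, Tuple

# metric -> (upper bound of Good, upper bound of Needs Improvement).
# Time-based metrics are in milliseconds; CLS is unitless.
THRESHOLDS: Dict[str, Tuple[float, float]] = {
    "FCP": (1800, 3000),
    "LCP": (2500, 4000),
    "CLS": (0.1, 0.25),
    "INP": (200, 500),
    "TTFB": (800, 1800),
}


def classify_metric(metric: str, value: float) -> str:
    """Return the bucket name for a metric value, per the table above."""
    if value < 0:
        raise ValueError("metric values cannot be negative")
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "Good"
    if value <= needs_improvement_max:
        return "Needs Improvement"
    return "Poor"
```

For instance, an LCP of exactly 2500 ms falls in the Good bucket, while 2501 ms is already Needs Improvement.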

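Distribution and selected metric values

PSI presents a distribution of these metrics so that developers can understand the range of experiences for that page or origin. The distribution is split into three categories: Good, Needs Improvement, and Poor, which are represented by green, yellow, and red bars. For example, seeing 11% within the yellow bar for LCP indicates that 11% of all observed LCP values fall between 2500 ms and 4000 ms.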

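PSI reports the 75th percentile for all metrics above the distribution bars. The 75th percentile is selected so that developers can understand the most frustrating user experiences on their site. These field metric values are classified as Good, Needs Improvement, or Poor by applying the same thresholds shown above.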
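
To make the two summaries concrete, the sketch below computes bucket shares and a 75th percentile from a list of raw samples. It is illustrative only: PSI receives aggregated data from CrUX rather than raw samples, the function names are hypothetical, and the nearest-rank percentile method is an assumption, since this page does not say how the percentile is computed.

```python
import math
from typing import Dict, List


def bucket_shares(values: List[float], good_max: float, ni_max: float) -> Dict[str, float]:
    """Fraction of samples in each bucket; both upper bounds are inclusive."""
    n = len(values)
    if n == 0:
        raise ValueError("at least one sample is required")
    good = sum(1 for v in values if v <= good_max)
    poor = sum(1 for v in values if v > ni_max)
    needs_improvement = n - good - poor
    return {
        "Good": good / n,
        "Needs Improvement": needs_improvement / n,
        "Poor": poor / n,
    }


def percentile_nearest_rank(values: List[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("at least one sample is required")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]
```

With eight hypothetical LCP samples `[1000, 2000, 2400, 2600, 3000, 3900, 4100, 5000]` and the LCP bounds 2500 and 4000, three samples are Good, three are Needs Improvement, and two are Poor, and the nearest-rank 75th percentile is 3900 ms, which lands in Needs Improvement.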

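Core Web Vitals

Core Web Vitals are a common set of performance signals critical to all web experiences. The Core Web Vitals metrics are INP, LCP, and CLS, and they may be aggregated at either the page or origin level. For aggregations with sufficient data in all three metrics, the aggregation passes the Core Web Vitals assessment if the 75th percentiles of all three metrics are Good. Otherwise, the aggregation does not pass the assessment. If the aggregation has insufficient INP data, it passes the assessment if the 75th percentiles of both LCP and CLS are Good. If either LCP or CLS has insufficient data, the page-level or origin-level aggregation cannot be assessed.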
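
The assessment rules above reduce to a short decision function. This is a sketch under stated assumptions: the name `assess_core_web_vitals` is hypothetical, each argument is the bucket of that metric's 75th percentile ("Good", "Needs Improvement", or "Poor"), and `None` stands for insufficient data.

```python
from typing import Optional


def assess_core_web_vitals(
    inp: Optional[str],
    lcp: Optional[str],
    cls: Optional[str],
) -> Optional[bool]:
    """Return True (passes), False (does not pass), or None (cannot be assessed)."""
    # LCP and CLS are both required for any assessment.
    if lcp is None or cls is None:
        return None
    # INP is only taken into account when there is enough INP data.
    required = [lcp, cls] if inp is None else [inp, lcp, cls]
    return all(bucket == "Good" for bucket in required)
```

For example, `assess_core_web_vitals(None, "Good", "Good")` passes because INP data is missing but LCP and CLS are both Good, while `assess_core_web_vitals("Good", None, "Good")` cannot be assessed.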

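Differences between field data in PSI and CrUX

The difference between the field data in PSI and the CrUX dataset on BigQuery is that the data in PSI is updated daily, while the BigQuery dataset is updated monthly and limited to origin-level data. Both data sources represent trailing 28-day periods.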

Lab diagnostics

PSI uses Lighthouse to analyze the given URL in a simulated environment for the Performance, Accessibility, Best Practices, and SEO categories.

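Score

At the top of the section are scores for each category, determined by running Lighthouse to collect and analyze diagnostic information about the page. A score of 90 or above is considered good. A score of 50 to 89 needs improvement, and a score below 50 is considered poor.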
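
The score bands above can be written as a small helper. This is a minimal sketch, assuming integer scores on a 0 to 100 scale; the function name `classify_lighthouse_score` and the returned labels are chosen for this example.

```python
def classify_lighthouse_score(score: int) -> str:
    """Map a category score (0-100) to the band described above."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 90:
        return "Good"
    if score >= 50:
        return "Needs Improvement"
    return "Poor"
```

The boundaries follow the text: 90 is already good, 89 and 50 both need improvement, and 49 is poor.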

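Metrics

The Performance category also shows the page's performance on different metrics, including First Contentful Paint, Largest Contentful Paint, Speed Index, Cumulative Layout Shift, Time to Interactive, and Total Blocking Time.

Each metric is scored and labeled with an icon: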

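- Good is indicated with a green circle

- Needs Improvement is indicated with an amber informational square

- Poor is indicated with a red warning triangle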

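Audits

Within each category are audits that provide information on how to improve the page's user experience. See the Lighthouse documentation for a detailed breakdown of each category's audits.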

Frequently asked questions (FAQs)

What device and network conditions does Lighthouse use to simulate a page load?

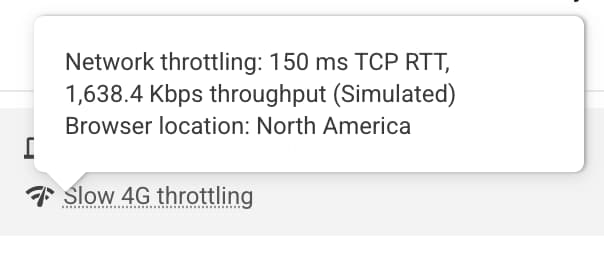

目前,Lighthouse 模拟中层设备 (Moto G4) 设备的网页加载条件 移动用户所在的移动网络;以及 通过有线连接在桌面设备上完成模拟。PageSpeed 还会在 Google 数据中心运行,该数据中心可能会因网络状况而异。您可以查看 Lighthouse 报告的环境块,了解测试的位置:

注意:PageSpeed 会报告在北美洲、欧洲或亚洲运行。

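Why do the field data and lab data sometimes contradict each other?

The field data is a historical report about how a particular URL has performed, and represents anonymized performance data from users in the real world on a variety of devices and network conditions. The lab data is based on a simulated load of a page on a single device with a fixed set of network conditions. As a result, the values may differ. See Why lab and field data can be different (and what to do about it) for more information.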

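Why is the 75th percentile chosen for all metrics?

Our goal is to make sure that pages work well for the majority of users. Focusing on the 75th percentile values of the metrics ensures that pages provide a good user experience under the most difficult device and network conditions. See Defining the Core Web Vitals metrics thresholds for more information.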

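What is a good score for the lab data?

Any green score (90+) is considered good, but note that having good lab data does not necessarily mean real-user experiences will also be good.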

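Why does the performance score change from run to run? I didn't change anything on my page!

Variability in performance measurement is introduced through a number of channels with different levels of impact. Several common sources of metric variability are local network availability, client hardware availability, and client resource contention.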

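Why is the real-user CrUX data not available for a URL or origin?

The Chrome User Experience Report aggregates real-world speed data from opted-in users. It requires that a URL be public (crawlable and indexable) and have a sufficient number of distinct samples to provide a representative, anonymized view of the performance of the URL or origin.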

More questions?

If you have a specific, answerable question about using PageSpeed Insights, ask your question in English on Stack Overflow.

If you have general feedback or questions about PageSpeed Insights, or you want to start a general discussion, start a thread in the mailing list.

If you have general questions about the Web Vitals metrics, start a thread in the web-vitals-feedback discussion group.
