Learn how to use Augmented Faces in your app.

Prerequisites

Make sure you understand fundamental AR concepts and know how to configure an ARCore session before proceeding.

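Using Augmented Faces in Android

Configure the ARCore session

To start using Augmented Faces, select the front camera in an existing ARCore session. Note: selecting the front camera changes ARCore's behavior in a number of ways.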

Java

    // Set a camera configuration that uses the front-facing camera.
    CameraConfigFilter filter =
        new CameraConfigFilter(session).setFacingDirection(CameraConfig.FacingDirection.FRONT);
    CameraConfig cameraConfig = session.getSupportedCameraConfigs(filter).get(0);
    session.setCameraConfig(cameraConfig);

Kotlin

    // Set a camera configuration that uses the front-facing camera.
    val filter = CameraConfigFilter(session).setFacingDirection(CameraConfig.FacingDirection.FRONT)
    val cameraConfig = session.getSupportedCameraConfigs(filter)[0]
    session.cameraConfig = cameraConfig

Enable AugmentedFaceMode:

Java

    Config config = new Config(session);
    config.setAugmentedFaceMode(Config.AugmentedFaceMode.MESH3D);
    session.configure(config);

Kotlin

    val config = Config(session)
    config.augmentedFaceMode = Config.AugmentedFaceMode.MESH3D
    session.configure(config)

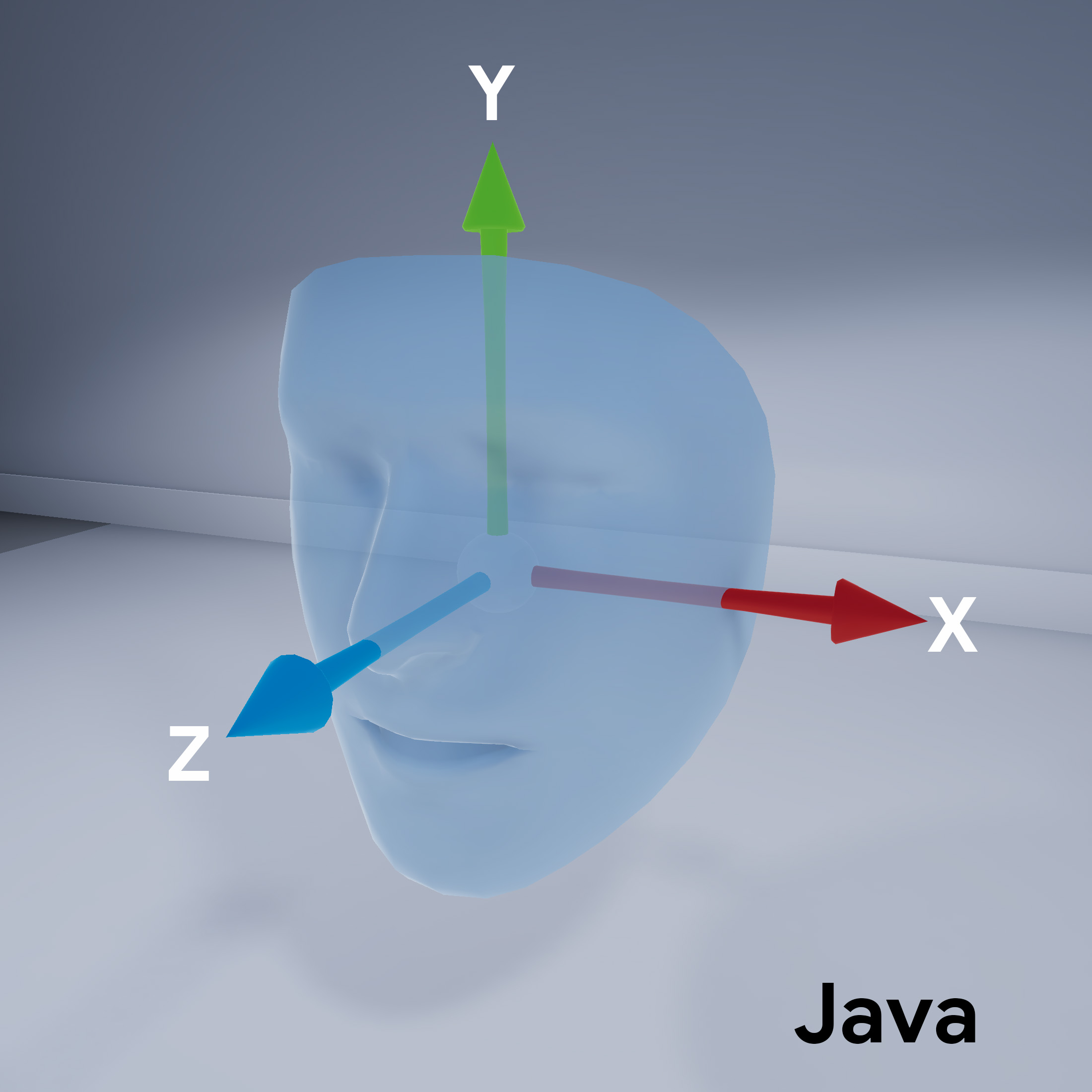

Face mesh orientation

Note the orientation of the face mesh.

Access the detected face

Get the Trackable for each detected face. A Trackable is something that ARCore can track and that anchors can be attached to.

Java

    // ARCore's face detection works best on upright faces, relative to gravity.
    Collection<AugmentedFace> faces = session.getAllTrackables(AugmentedFace.class);

Kotlin

    // ARCore's face detection works best on upright faces, relative to gravity.
    val faces = session.getAllTrackables(AugmentedFace::class.java)

Get the TrackingState for each Trackable. If it is TRACKING, its pose is currently known by ARCore.

Java

    for (AugmentedFace face : faces) {
      if (face.getTrackingState() == TrackingState.TRACKING) {
        // UVs and indices can be cached as they do not change during the session.
        FloatBuffer uvs = face.getMeshTextureCoordinates();
        ShortBuffer indices = face.getMeshTriangleIndices();
        // Center and region poses, mesh vertices, and normals are updated each frame.
        Pose facePose = face.getCenterPose();
        FloatBuffer faceVertices = face.getMeshVertices();
        FloatBuffer faceNormals = face.getMeshNormals();
        // Render the face using these values with OpenGL.
      }
    }

Kotlin

    faces.forEach { face ->
      if (face.trackingState == TrackingState.TRACKING) {
        // UVs and indices can be cached as they do not change during the session.
        val uvs = face.meshTextureCoordinates
        val indices = face.meshTriangleIndices
        // Center and region poses, mesh vertices, and normals are updated each frame.
        val facePose = face.centerPose
        val faceVertices = face.meshVertices
        val faceNormals = face.meshNormals
        // Render the face using these values with OpenGL.
      }
    }
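Before handing the mesh buffers to a renderer, it can help to sanity-check how they are laid out. The following is a minimal sketch, not part of ARCore: FaceMeshStats and its methods are hypothetical names introduced here for illustration. It assumes that the vertex buffer packs three floats (x, y, z) per vertex and that the index buffer stores triangles as consecutive triplets of vertex indices. It uses only java.nio, so it can be tried without a device.

```java
import java.nio.FloatBuffer;
import java.nio.ShortBuffer;

/** Hypothetical helper (not an ARCore class) for inspecting face mesh buffers. */
final class FaceMeshStats {
    private FaceMeshStats() {}

    /** Number of vertices, assuming (x, y, z) packing. */
    static int vertexCount(FloatBuffer vertices) {
        return vertices.remaining() / 3;
    }

    /** Number of triangles, assuming three consecutive indices per triangle. */
    static int triangleCount(ShortBuffer indices) {
        return indices.remaining() / 3;
    }

    /** Average of all vertex positions as {x, y, z}; leaves the buffer position untouched. */
    static float[] centroid(FloatBuffer vertices) {
        int count = vertexCount(vertices);
        if (count == 0) {
            throw new IllegalArgumentException("empty vertex buffer");
        }
        int base = vertices.position();
        float sumX = 0f, sumY = 0f, sumZ = 0f;
        for (int i = 0; i < count; i++) {
            // Absolute reads do not advance the buffer's position.
            sumX += vertices.get(base + 3 * i);
            sumY += vertices.get(base + 3 * i + 1);
            sumZ += vertices.get(base + 3 * i + 2);
        }
        return new float[] {sumX / count, sumY / count, sumZ / count};
    }

    /** True if every index refers to an existing vertex. */
    static boolean indicesInRange(ShortBuffer indices, int vertexCount) {
        for (int i = indices.position(); i < indices.limit(); i++) {
            int index = indices.get(i) & 0xFFFF; // read the short as an unsigned value
            if (index >= vertexCount) {
                return false;
            }
        }
        return true;
    }
}
```

Inside the tracking loop, such a helper could be called as FaceMeshStats.indicesInRange(indices, FaceMeshStats.vertexCount(faceVertices)). Because the UVs and indices do not change during the session, a check like this would only need to run once, while anything derived from the vertices would be recomputed each frame.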