Learn how to use the Scene Semantics API in your own apps.

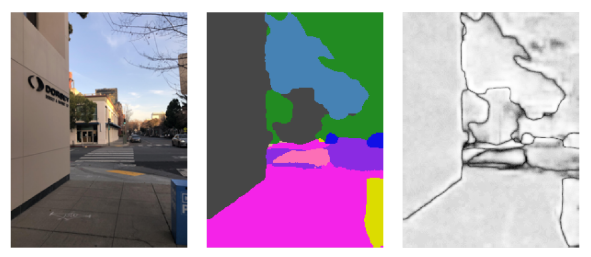

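The Scene Semantics API lets developers understand the scene around the user by providing real-time semantic information from a machine learning model. Given an image of an outdoor scene, the API returns a label for each pixel from a set of useful semantic classes, such as sky, building, tree, road, sidewalk, vehicle, and person. In addition to pixel labels, the Scene Semantics API provides a confidence value for each pixel label and an easy way to query how prevalent a given label is in the outdoor scene.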

From left to right: an example input image, the semantic image of pixel labels, and the corresponding confidence image.

Prerequisites

Before proceeding, make sure that you understand fundamental AR concepts and how to configure an ARCore session.

Enable Scene Semantics

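In a new ARCore session, check whether the user's device supports the Scene Semantics API. Because of processing power constraints, not all ARCore-compatible devices support the Scene Semantics API.

To save resources, Scene Semantics is disabled by default in ARCore. Enable semantic mode so that your app can use the Scene Semantics API.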

Java

```java
Config config = session.getConfig();

// Check whether the user's device supports the Scene Semantics API.
boolean isSceneSemanticsSupported =
    session.isSemanticModeSupported(Config.SemanticMode.ENABLED);
if (isSceneSemanticsSupported) {
  config.setSemanticMode(Config.SemanticMode.ENABLED);
}
session.configure(config);
```

Kotlin

```kotlin
val config = session.config

// Check whether the user's device supports the Scene Semantics API.
val isSceneSemanticsSupported =
  session.isSemanticModeSupported(Config.SemanticMode.ENABLED)
if (isSceneSemanticsSupported) {
  config.semanticMode = Config.SemanticMode.ENABLED
}
session.configure(config)
```

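Obtain the semantic image

Once Scene Semantics is enabled, you can retrieve the semantic image. The semantic image is an `ImageFormat.Y8` image in which each pixel corresponds to a semantic label defined by `SemanticLabel`.

Use `Frame.acquireSemanticImage()` to acquire the semantic image: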

Java

```java
// Retrieve the semantic image for the current frame, if available.
try (Image semanticImage = frame.acquireSemanticImage()) {
  // Use the semantic image here.
} catch (NotYetAvailableException e) {
  // No semantic image retrieved for this frame.
  // The output image may be missing for the first couple frames before the model has had a
  // chance to run yet.
}
```

Kotlin

```kotlin
// Retrieve the semantic image for the current frame, if available.
try {
  frame.acquireSemanticImage().use { semanticImage ->
    // Use the semantic image here.
  }
} catch (e: NotYetAvailableException) {
  // No semantic image retrieved for this frame.
}
```

Depending on the device, the semantic image should become available roughly 1-3 frames after the session starts.
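Because the semantic image is a single-plane `ImageFormat.Y8` image, reading a pixel's label comes down to indexing a byte buffer. The sketch below shows one way to do that; `Y8PixelReader` is a hypothetical helper, not part of ARCore. It assumes a pixel stride of 1 and takes the row stride as a parameter, because image rows can be padded beyond the visible width. Mapping the returned integer to a specific `SemanticLabel` value is deliberately left out; check the `SemanticLabel` reference for that.

```java
import java.nio.ByteBuffer;

/**
 * Hypothetical helper, not part of ARCore: reads per-pixel values from the single
 * plane of an ImageFormat.Y8 image such as the semantic image.
 * Assumes a pixel stride of 1; rows may be padded, so the row stride is passed in.
 */
final class Y8PixelReader {
  private Y8PixelReader() {}

  /** Returns the unsigned 8-bit value (0-255) stored at column x, row y. */
  static int valueAt(ByteBuffer plane, int rowStride, int x, int y) {
    // Absolute get(index) leaves the buffer position untouched.
    // Masking with 0xFF converts Java's signed byte to an unsigned value.
    return plane.get(y * rowStride + x) & 0xFF;
  }

  /** Counts how many visible pixels hold each of the 256 possible values; row padding is skipped. */
  static int[] histogram(ByteBuffer plane, int rowStride, int width, int height) {
    int[] counts = new int[256];
    for (int y = 0; y < height; y++) {
      for (int x = 0; x < width; x++) {
        counts[valueAt(plane, rowStride, x, y)]++;
      }
    }
    return counts;
  }
}
```

Inside the `try` block of the previous example, the arguments would typically come from the `android.media.Image` API, for example `semanticImage.getPlanes()[0].getBuffer()`, `getRowStride()` on that same plane, and `semanticImage.getWidth()` / `getHeight()`.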

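Obtain the confidence image

In addition to the semantic image, which provides a label for each pixel, the API provides a confidence image containing the corresponding per-pixel confidence values. The confidence image is an `ImageFormat.Y8` image in which each pixel holds a value in the range [0, 255] that corresponds to the probability associated with that pixel's semantic label.

Use `Frame.acquireSemanticConfidenceImage()` to acquire the semantic confidence image: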

Java

```java
// Retrieve the semantic confidence image for the current frame, if available.
try (Image semanticConfidenceImage = frame.acquireSemanticConfidenceImage()) {
  // Use the semantic confidence image here.
} catch (NotYetAvailableException e) {
  // No semantic confidence image retrieved for this frame.
  // The output image may be missing for the first couple frames before the model has had a
  // chance to run yet.
}
```

Kotlin

```kotlin
// Retrieve the semantic confidence image for the current frame, if available.
try {
  frame.acquireSemanticConfidenceImage().use { semanticConfidenceImage ->
    // Use the semantic confidence image here.
  }
} catch (e: NotYetAvailableException) {
  // No semantic confidence image retrieved for this frame.
}
```

Depending on the device, the confidence image should become available roughly 1-3 frames after the session starts.
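A common use of the confidence image is to keep only pixels whose label the model is reasonably sure about. The following is a minimal sketch under several assumptions: `ConfidentLabelMask` is a hypothetical helper, not an ARCore API; the semantic and confidence images are assumed to have the same dimensions and a pixel stride of 1; and the byte value is assumed to map linearly to a probability via `value / 255`, which this page does not state explicitly. The integer `labelValue` must be the Y8 value that represents the label of interest.

```java
import java.nio.ByteBuffer;

/**
 * Hypothetical helper, not part of ARCore: builds a mask of pixels that carry a given
 * label with at least a minimum confidence. Assumes both Y8 images share the same
 * width and height and use a pixel stride of 1; row strides are passed separately.
 */
final class ConfidentLabelMask {
  private ConfidentLabelMask() {}

  /** Assumed linear mapping of a confidence byte in [0, 255] to a probability in [0.0, 1.0]. */
  static float toProbability(byte confidence) {
    return (confidence & 0xFF) / 255f;
  }

  /** Returns a row-major mask of size width * height; true means "label matches and is confident". */
  static boolean[] build(ByteBuffer labels, int labelRowStride,
                         ByteBuffer confidences, int confidenceRowStride,
                         int width, int height, int labelValue, float minProbability) {
    boolean[] mask = new boolean[width * height];
    for (int y = 0; y < height; y++) {
      for (int x = 0; x < width; x++) {
        int label = labels.get(y * labelRowStride + x) & 0xFF;
        float probability = toProbability(confidences.get(y * confidenceRowStride + x));
        mask[y * width + x] = label == labelValue && probability >= minProbability;
      }
    }
    return mask;
  }
}
```

The threshold is an app-level choice: a higher `minProbability` drops uncertain pixels at the cost of shrinking the mask, which may matter near object boundaries where the model tends to be less certain.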

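Query the fraction of pixels with a semantic label

You can also query the fraction of pixels in the current frame that belong to a particular class, such as sky. This query is more efficient than acquiring the semantic image and searching it pixel by pixel for a specific label. The returned fraction is a float in the range [0.0, 1.0].

Use `Frame.getSemanticLabelFraction()` to get the fraction for a given label: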

Java

```java
// Retrieve the fraction of pixels for the semantic label sky in the current frame.
try {
  float outFraction = frame.getSemanticLabelFraction(SemanticLabel.SKY);
  // Use the semantic label fraction here.
} catch (NotYetAvailableException e) {
  // No fraction of semantic labels was retrieved for this frame.
}
```

Kotlin

```kotlin
// Retrieve the fraction of pixels for the semantic label sky in the current frame.
try {
  val fraction = frame.getSemanticLabelFraction(SemanticLabel.SKY)
  // Use the semantic label fraction here.
} catch (e: NotYetAvailableException) {
  // No fraction of semantic labels was retrieved for this frame.
}
```
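To make the meaning of the returned value concrete, the sketch below computes the same kind of quantity by hand: the share of visible pixels in a Y8 semantic image whose value equals a given label. It is for illustration only; `LabelFraction` is a hypothetical helper, and in an app the built-in `Frame.getSemanticLabelFraction()` is the cheaper option because it avoids this full scan on the CPU. As before, the sketch assumes a pixel stride of 1 and takes the row stride as a parameter so that row padding is not counted.

```java
import java.nio.ByteBuffer;

/**
 * Hypothetical helper, not part of ARCore: fraction of visible pixels in a Y8 semantic
 * image whose value equals labelValue. Illustrates the quantity returned by
 * Frame.getSemanticLabelFraction(); prefer that API in real apps.
 */
final class LabelFraction {
  private LabelFraction() {}

  static float compute(ByteBuffer labels, int rowStride, int width, int height, int labelValue) {
    if (width <= 0 || height <= 0) {
      return 0f; // Empty image: no pixels, so report zero coverage.
    }
    long matches = 0;
    for (int y = 0; y < height; y++) {
      for (int x = 0; x < width; x++) {
        // Only columns 0..width-1 are visited, so padding bytes are ignored.
        if ((labels.get(y * rowStride + x) & 0xFF) == labelValue) {
          matches++;
        }
      }
    }
    // Result lies in [0.0, 1.0], matching the documented range of the API.
    return (float) matches / ((long) width * height);
  }
}
```

A typical use of either value is a simple heuristic, such as treating a frame as "sky visible" when the sky fraction exceeds a threshold you tune for your app.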