Friday, February 5, 2010

Posted by Michael Wyszomierski, Search Quality Team

Webmaster level: All

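If you allow users to publish content on your website, such as leaving comments or creating user profiles, you will likely see spammers try to exploit these features to drive traffic to their own sites. Having this kind of spam on your site is unpleasant for everyone. Users may be shown annoying advertisements that lead them to low-quality or dangerous sites containing scams or malware. And as a webmaster, you may find your site's position in search results affected by content that violates the quality guidelines in our Webmaster Guidelines.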

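There are ways to address this problem, such as moderating comments and reviewing new user accounts, but spam often arrives in volumes too large to review in time. Spam reaches unmanageable levels so easily because most of it is not created by hand. Instead, spammers use computer programs called "bots" that automatically fill out web forms to create spam, and these bots generate spam far faster than any human can review it.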

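To level the playing field, you can take steps to ensure that only humans can use the features of your site that are open to spam. One way to determine which visitors are human is to use a CAPTCHA, which stands for "completely automated public Turing test to tell computers and humans apart."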

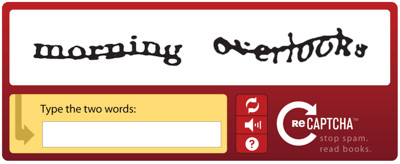

A typical CAPTCHA shows an image of distorted letters that humans can read but computers cannot easily decipher. Here is an example:

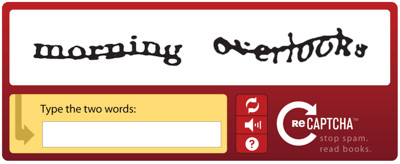

You can easily put this technology to work on your own site with reCAPTCHA, a free service from Google.

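One unique aspect of reCAPTCHA is that the data it collects is used to improve the process of scanning text such as books and newspapers. By using reCAPTCHA, you not only protect your site from spammers but also help us digitize the world's books.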

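If you'd like to use reCAPTCHA on your site for free, you can sign up here. We also provide plugins that make it easy to install on popular applications and programming environments such as WordPress and PHP.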
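Whatever plugin you use, the key step happens on your server: before accepting a comment or a new account, the server asks reCAPTCHA whether the submitted answer is valid, and rejects the submission if it is not. The sketch below illustrates that check in Python. It assumes the present-day reCAPTCHA `siteverify` endpoint and its `secret`/`response`/`remoteip` form fields and `g-recaptcha-response` form field name, which postdate this 2010 post; the function names `is_human` and `handle_comment` are hypothetical. Treat it as an illustration under those assumptions, not a replacement for the official plugins.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Optional

# Assumption: the current reCAPTCHA server-side verification endpoint.
VERIFY_URL = "https://www.google.com/recaptcha/api/siteverify"


def _http_post(url: str, data: bytes) -> bytes:
    """Send a form-encoded POST request and return the raw response body."""
    req = urllib.request.Request(
        url,
        data=data,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return resp.read()


def is_human(token: str, secret: str, remote_ip: Optional[str] = None,
             post: Callable[[str, bytes], bytes] = _http_post) -> bool:
    """Return True only if reCAPTCHA confirms the token; fail closed otherwise."""
    if not token:
        return False  # the form was submitted without solving the CAPTCHA
    fields = {"secret": secret, "response": token}
    if remote_ip:
        fields["remoteip"] = remote_ip
    try:
        body = post(VERIFY_URL, urllib.parse.urlencode(fields).encode("ascii"))
        result = json.loads(body)
    except (OSError, ValueError):
        return False  # network or JSON error: treat the visitor as unverified
    return isinstance(result, dict) and result.get("success") is True


def handle_comment(form: dict, secret: str, remote_ip: str,
                   post: Callable[[str, bytes], bytes] = _http_post) -> str:
    """Hypothetical comment handler: only accept comments from verified humans."""
    token = form.get("g-recaptcha-response", "")
    if not is_human(token, secret, remote_ip, post=post):
        return "rejected"
    # A real site would store form["comment"] here.
    return "accepted"
```

The `post` parameter exists so the HTTP call can be swapped out in tests; the check fails closed, so a network error never lets an unverified submission through.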